Why AI vendor selection fails at the executive level

AI vendors sell possibility. Executives fund reality. When evaluation is driven by demos, leaders approve tools before they approve the conditions required for success.

Three patterns drive failure. Leaders approve pilots without a measurable outcome. Teams underestimate data and integration work. Security and legal reviews happen late, when momentum makes stopping politically hard.

The fix is discipline. Treat AI vendor selection as investment governance.

The AI investment governance model

Use a consistent decision sequence. It reduces debate, keeps teams focused, and creates board-ready clarity. If the sequence breaks, outcomes become optional and spend becomes permanent.

- Outcome. What measurable change are you funding?

- Data dependency. What data is required, who owns it, and how it is accessed.

- Risk posture. Security, privacy, regulatory exposure, and model risk.

- Total cost curve. Year one cost plus scale cost, support cost, and vendor services.

- Exit optionality. What it takes to leave, migrate, or switch models.
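The total cost curve item can be made concrete with simple arithmetic. The sketch below is a minimal illustration, not a pricing model: the cost categories follow the list above, while the class name, function name, and every figure are hypothetical assumptions chosen only to show how year one cost can understate scale cost.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CostAssumptions:
    """Hypothetical cost inputs; replace with figures from the vendor quote."""
    license_per_year: int       # flat platform fee
    usage_cost_per_user: int    # metered usage per active user per year
    support_per_year: int       # internal and vendor support
    integration_one_time: int   # integration work, assumed paid in year one only
    services_per_year: int      # ongoing vendor professional services


def yearly_costs(costs: CostAssumptions, users_by_year: List[int]) -> List[int]:
    """Return total cost for each year given an assumed adoption ramp."""
    totals = []
    for year_index, users in enumerate(users_by_year):
        total = (
            costs.license_per_year
            + costs.usage_cost_per_user * users
            + costs.support_per_year
            + costs.services_per_year
        )
        if year_index == 0:
            total += costs.integration_one_time
        totals.append(total)
    return totals


# Illustrative numbers only: a 100-user pilot growing to 2,000 users.
assumptions = CostAssumptions(
    license_per_year=100_000,
    usage_cost_per_user=200,
    support_per_year=20_000,
    integration_one_time=150_000,
    services_per_year=50_000,
)
curve = yearly_costs(assumptions, users_by_year=[100, 500, 2000])
print(curve)  # [340000, 270000, 570000]
```

Under these assumed inputs, year three costs more than year one even though integration is already paid for, because usage scales with adoption. That gap is what a finance partner would want to see before approving a pilot.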

Red flags in AI vendor narratives

Hype sounds credible because it mixes truth with omission. These red flags predict pain during scale.

- Outcome avoidance. The vendor talks features but avoids measurable business impact.

- Data vagueness. The vendor claims quick value without specifying data access, quality, and ownership.

- Security deflection. Security answers stay high level or get delayed.

- Integration minimization. Plug-and-play promises in environments where nothing is plug-and-play.

- Services dependence. Success depends on ongoing professional services rather than internal capability.

- Lock-in pressure. Pricing or architecture pushes you toward long contract terms before value is proven.

The AI vendor scorecard executives should use

Scorecards stop emotional decisions and create shared language across business, technology, finance, and risk. Keep it short enough to run quarterly.

- Outcome alignment. Clear KPI change and timeframe.

- Data readiness. Access, quality, lineage, and ownership for required datasets.

- Security and compliance. Controls, audit evidence, incident handling, and data retention.

- Model and operational risk. Monitoring, drift management, human review needs, and escalation.

- Total cost. Licensing plus usage plus integration plus support plus services.

- Operating impact. Workflow change, training effort, and adoption requirements.

- Exit path. Portability of data, prompts, models, and integrations.
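One way to run the scorecard above is as a weighted score with a minimum floor per criterion. The sketch below assumes a 1 to 5 rating scale; the weights, the floor value, and all function names are hypothetical choices for illustration, not values the article prescribes.

```python
from typing import Dict, List, Tuple

# Hypothetical weights for the seven criteria; they sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "outcome_alignment": 0.25,
    "data_readiness": 0.20,
    "security_compliance": 0.20,
    "model_operational_risk": 0.10,
    "total_cost": 0.10,
    "operating_impact": 0.05,
    "exit_path": 0.10,
}

MIN_ACCEPTABLE = 3  # assumed floor: any criterion rated below this is flagged


def score_vendor(scores: Dict[str, int]) -> Tuple[float, List[str]]:
    """Return (weighted score, flagged criteria) for ratings on a 1-5 scale."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the scorecard criteria")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be rated 1-5, got {value}")
    weighted = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    flagged = [name for name in WEIGHTS if scores[name] < MIN_ACCEPTABLE]
    return round(weighted, 2), flagged


# Illustrative ratings for a fictional vendor.
example = {
    "outcome_alignment": 4,
    "data_readiness": 3,
    "security_compliance": 2,
    "model_operational_risk": 3,
    "total_cost": 4,
    "operating_impact": 3,
    "exit_path": 2,
}
total, flags = score_vendor(example)
print(total, flags)
```

The floor matters as much as the total. A vendor can post a respectable weighted score while still failing on security or exit path, and the flagged list keeps those weaknesses visible instead of averaging them away.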

Decision rights and approval thresholds

AI investments introduce new risk types. Define who decides what, and when approvals escalate.

- Business owner. Accountable for outcome and adoption.

- Technology owner. Accountable for integration, reliability, and operating impact.

- Security and risk owner. Accountable for controls, exceptions, and evidence.

- Finance partner. Validates total cost curve and renewal leverage.

Set approval thresholds up front. High-risk data, customer impact, or material spend needs executive review, not informal consensus.
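Approval thresholds are easier to enforce when they are written down as explicit rules. The following sketch encodes the three escalation triggers named above. The spend threshold, field names, and route labels are hypothetical assumptions that each organization would set for itself.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical definition of "material spend"; set this per organization.
MATERIAL_SPEND_THRESHOLD = 250_000


@dataclass
class Proposal:
    annual_spend: int
    uses_high_risk_data: bool
    customer_facing: bool


def escalation_reasons(proposal: Proposal) -> List[str]:
    """List every trigger that requires executive review."""
    reasons = []
    if proposal.uses_high_risk_data:
        reasons.append("high-risk data")
    if proposal.customer_facing:
        reasons.append("customer impact")
    if proposal.annual_spend >= MATERIAL_SPEND_THRESHOLD:
        reasons.append("material spend")
    return reasons


def approval_route(proposal: Proposal) -> str:
    """Route to executive review if any trigger fires, else to the named owners."""
    return "executive_review" if escalation_reasons(proposal) else "owner_approval"


internal_tool = Proposal(annual_spend=40_000, uses_high_risk_data=False, customer_facing=False)
support_bot = Proposal(annual_spend=90_000, uses_high_risk_data=True, customer_facing=True)

print(approval_route(internal_tool))   # owner_approval
print(approval_route(support_bot))     # executive_review
print(escalation_reasons(support_bot)) # ['high-risk data', 'customer impact']
```

Returning the reasons alongside the route gives reviewers a record of why a proposal escalated, which makes the decision easier to audit later.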

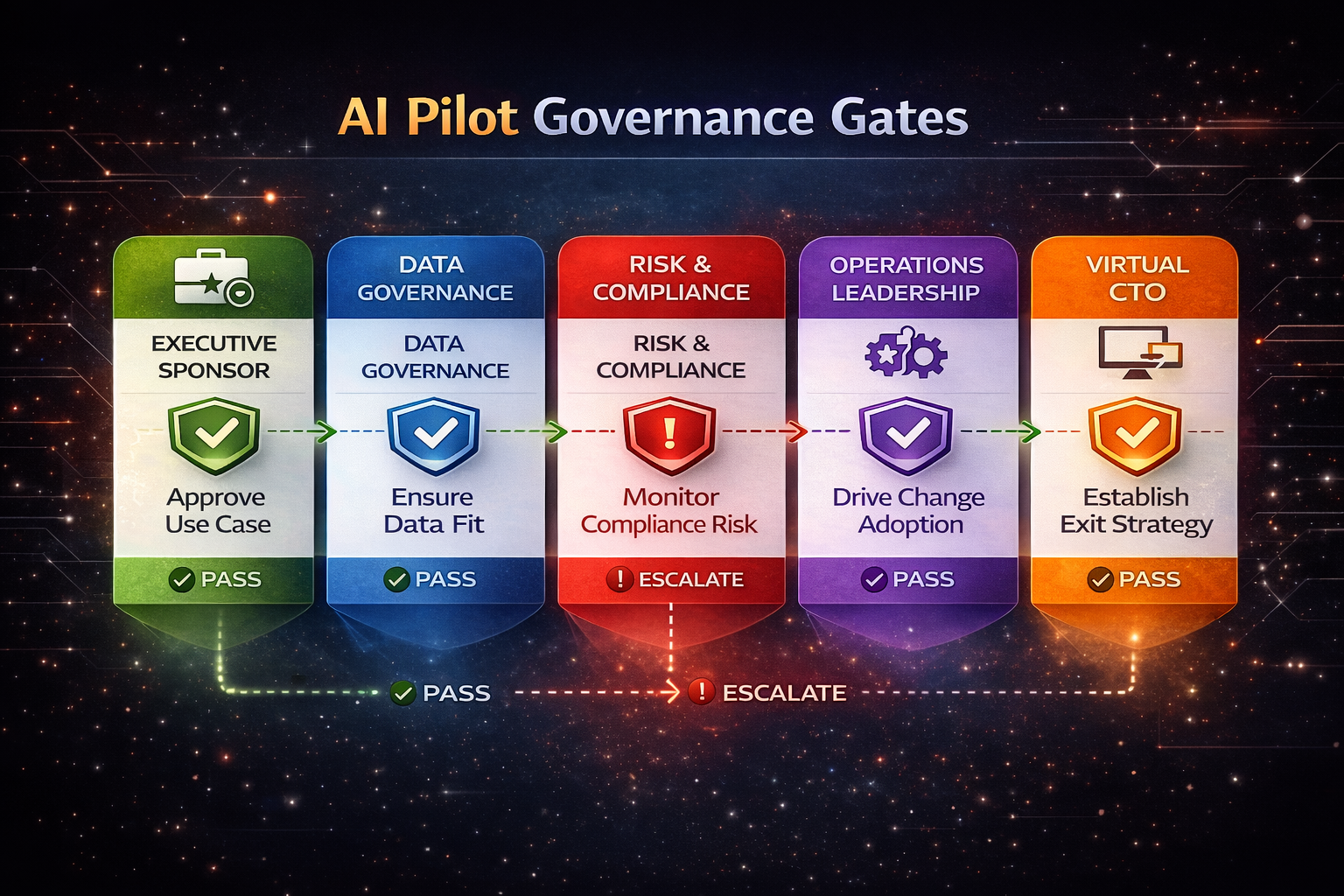

A 90-day pilot governance structure that works

Pilots fail when they become permanent. Prevent that with gates and stop rules.

Gate 1. Before kickoff

- Outcome statement and KPI baseline.

- Named owners and decision rights.

- Data access confirmed with classification and controls.

- Exit criteria defined.

Gate 2. Mid-pilot review

- Leading indicators such as adoption, cycle time, and quality.

- Security evidence and exception status.

- Cost tracking against the scale curve.

Gate 3. Scale decision

- KPI movement under real operating conditions.

- Support model and monitoring readiness.

- Contract terms aligned to value and exit options.
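The three gates above can be tracked as simple checklists with a stop rule. The sketch below assumes the strictest possible rule, where any missing item blocks the gate. That rule and all identifiers are illustrative assumptions, and the checklist items paraphrase the lists above.

```python
from typing import Dict, List, Tuple

# Checklist items paraphrased from the three gates described above.
GATES: Dict[str, List[str]] = {
    "gate_1_before_kickoff": [
        "outcome statement and KPI baseline",
        "named owners and decision rights",
        "data access confirmed with classification and controls",
        "exit criteria defined",
    ],
    "gate_2_mid_pilot_review": [
        "leading indicators reviewed",
        "security evidence and exception status",
        "cost tracked against the scale curve",
    ],
    "gate_3_scale_decision": [
        "KPI movement under real operating conditions",
        "support model and monitoring readiness",
        "contract terms aligned to value and exit options",
    ],
}


def gate_decision(gate: str, evidence: Dict[str, bool]) -> Tuple[str, List[str]]:
    """Apply the assumed stop rule: every item must be evidenced to proceed."""
    missing = [item for item in GATES[gate] if not evidence.get(item, False)]
    return ("proceed" if not missing else "stop", missing)


# Illustrative evidence for a pilot that has not yet defined exit criteria.
kickoff_evidence = {
    "outcome statement and KPI baseline": True,
    "named owners and decision rights": True,
    "data access confirmed with classification and controls": True,
}
decision, missing_items = gate_decision("gate_1_before_kickoff", kickoff_evidence)
print(decision, missing_items)  # stop ['exit criteria defined']
```

A softer rule, such as allowing a documented exception with an owner and a due date, is a reasonable alternative. What matters is that the rule is set before the pilot starts rather than negotiated at the gate.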

What boards should expect to see

Boards do not need model internals. They need confidence that leadership governs spend and risk.

- Outcome clarity and KPI movement.

- Risk posture, exceptions, and remediation progress.

- Total cost curve and renewal leverage.

- Clear owners and decision cadence.

This oversight becomes much stronger when leaders apply the same discipline to broader vendor decisions. That is why this article connects naturally to two related topics: vendor governance without unmanaged sprawl, and the data foundations required before AI scale.

Frequently Asked Questions

How should leaders evaluate AI vendors?

Leaders should evaluate AI vendors through a governance model tied to outcomes, data readiness, risk controls, total cost, and exit options rather than feature lists or demo quality.

What causes AI vendor evaluations to fail?

Evaluations fail when leaders approve tools before defining measurable outcomes, underestimate data and integration work, and delay security or legal review until late in the process.

What should an executive AI vendor scorecard include?

The scorecard should cover outcome alignment, data readiness, security and compliance, model and operational risk, total cost, operating impact, and exit path.

How should leaders govern AI pilots?

Pilots should run through three gates: before kickoff, at a mid-pilot review, and at the scale decision. Each gate should include owners, stop rules, and clear exit criteria.

What should boards expect to see from AI vendor oversight?

Boards should see outcome clarity, KPI movement, risk posture, total cost curve, renewal leverage, named owners, and a clear decision cadence.

Want AI vendor decisions that hold up under board scrutiny?

If demos keep driving decisions, pilots drift without outcomes, or risk reviews happen late, a short working session will produce a vendor scorecard, pilot gates, and an approval model leaders can run.
